ELEPHANT BIRD EXHIBIT - A SPATIAL RECONSTRUCTION

A fisheye frame with its mask (red overlay), which removes unwanted elements (in this case, the camera operator).

Intro

This project presents an interactive 3D reconstruction of the elephant bird exhibit at Bird Paradise, part of Mandai Wildlife Reserve in Singapore. The scene was captured in a dimly lit indoor rest space and reconstructed through a custom workflow combining 360 capture, photogrammetry, Gaussian splatting, and web-based rendering.

Capture conditions

The exhibit was recorded with an Insta360 X5 using PureVideo mode to preserve as much usable detail as possible in the low-light environment. The capture took approximately seven minutes inside an air-conditioned visitor rest area within Bird Paradise.

Frame extraction and masking

Instead of relying on a stitched 360 output, I extracted frames separately from the front and rear lenses using a custom application I built. This avoided the artefacts introduced by the artificial seam that is typically created when the two lenses are merged into a full 360 image or video.

Because I was visible in every frame while carrying the camera, I also developed a custom masking pipeline powered by SAM 3, Meta’s AI segmentation model. This allowed unwanted elements — including the camera operator — to be removed from the dataset before reconstruction.

The dataset consists of 2 × 672 = 1,344 frames: 672 from each of the camera's front and rear lenses.
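As a quick sanity check on these figures, the arithmetic below works out the implied sampling rate. It assumes the capture lasted exactly seven minutes and that frames were extracted at even intervals; the text only says "approximately seven minutes", so the rate is indicative only.

```python
# Figures from the write-up. The duration is approximate in the text;
# exactly 7 minutes is an assumption made for illustration.
LENSES = 2
FRAMES_PER_LENS = 672
CAPTURE_SECONDS = 7 * 60

total_frames = LENSES * FRAMES_PER_LENS
# Assumes frames were sampled at even intervals across the recording.
seconds_between_frames = CAPTURE_SECONDS / FRAMES_PER_LENS
frames_per_second = FRAMES_PER_LENS / CAPTURE_SECONDS

print(total_frames)                      # 1344
print(round(seconds_between_frames, 3))  # 0.625
print(round(frames_per_second, 2))       # 1.6
```

Under those assumptions, each lens contributes roughly one frame every 0.6 seconds.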

Photogrammetry and training

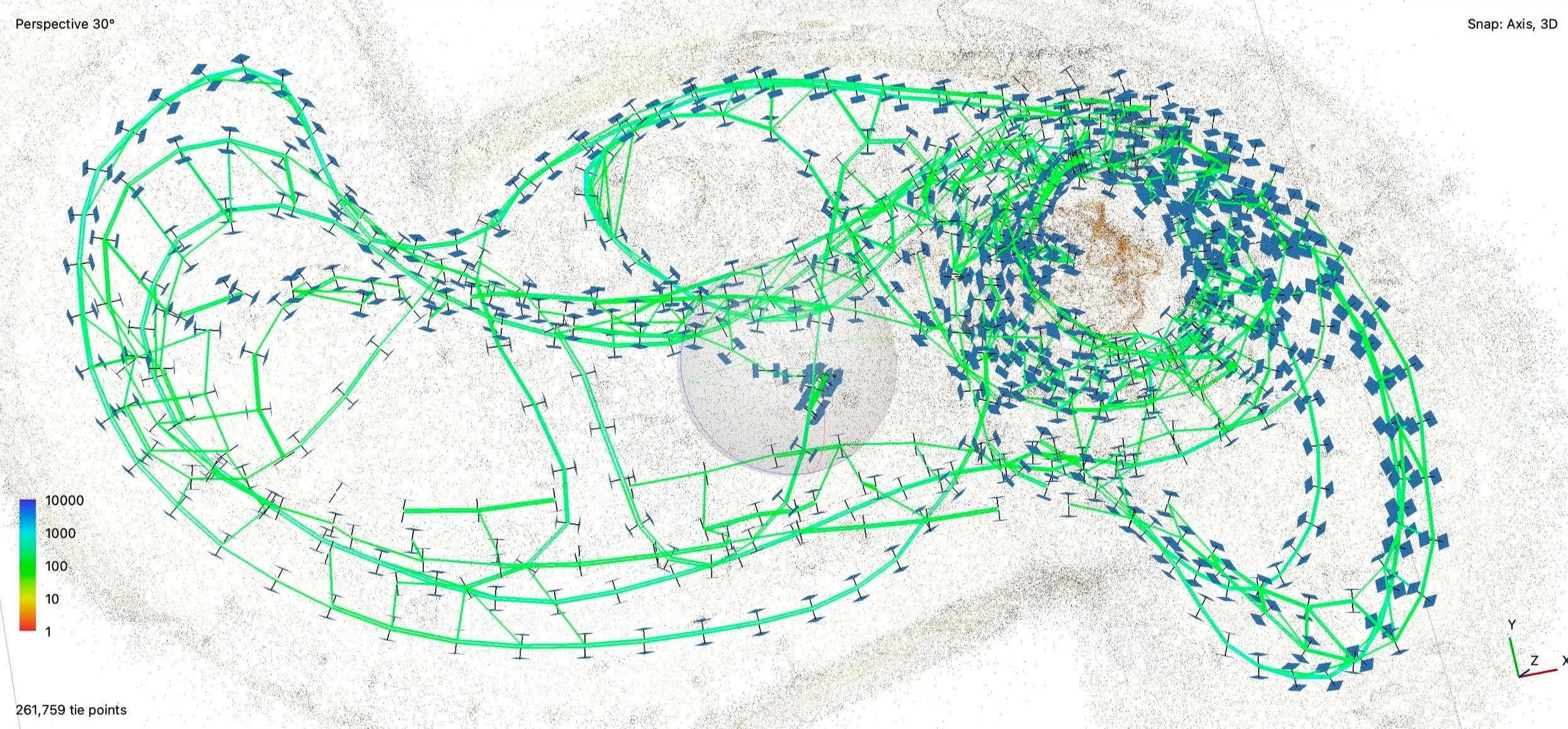

From the video capture, I generated approximately 1,300 fisheye images. These were aligned in Agisoft Metashape, a demanding step due to both the image count and the complexity of fisheye photography, but the full set was successfully aligned.

The aligned camera set was then used to train a Gaussian splat scene in Brush, an open-source Gaussian splat training engine under development by Arthur Brussee. Training was carefully tuned to improve splat coverage and preserve detail throughout the interior space.

Top view of the room in Metashape, showing the 1,344 camera positions and the camera path (green). Each front and rear lens pair is connected by a line.

Refinement and web delivery

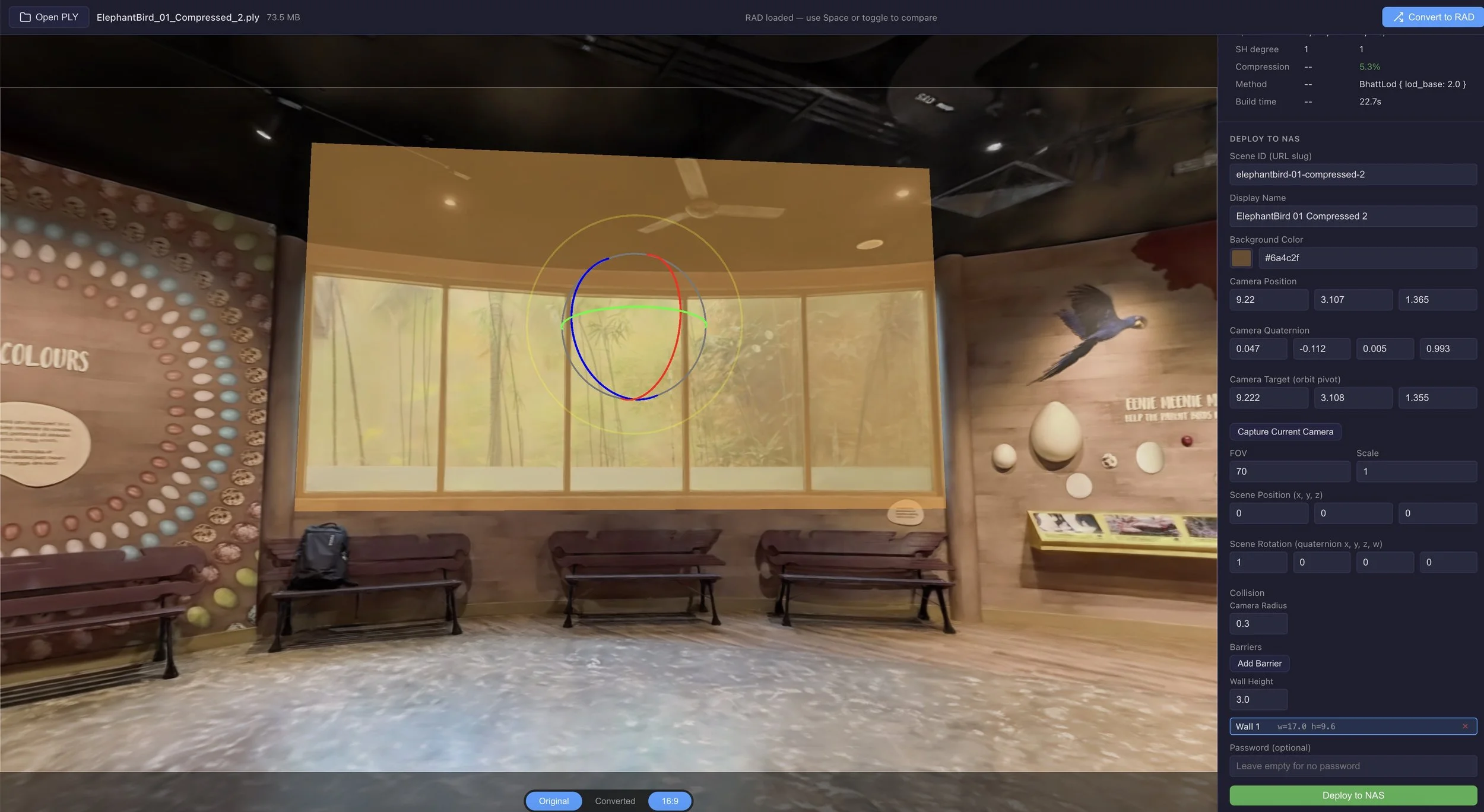

The resulting scene was refined and compressed in SuperSplat, a Gaussian splat editor under development by Will Eastcott. For web deployment, I then converted the scene from PLY to RAD format through a custom workflow based on Spark 2.0, an open-source Gaussian splat renderer from World Labs / SparkJS.

I added a “barrier” wall across the transparent window, where there are no Gaussian splats, to prevent users from accidentally leaving the room. The barrier is a simple 3D plane that is invisible during the experience and can be translated, rotated, and scaled.
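As a rough illustration of how such a barrier could work, the sketch below models it as a finite rectangle and tests whether a camera move crosses it. The `Barrier` class, its fields, and the example coordinates are all hypothetical; they are not taken from the actual viewer or converter.

```python
from dataclasses import dataclass

Vec = tuple[float, float, float]

def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

@dataclass
class Barrier:
    """Hypothetical invisible wall: a rectangle placed in the scene."""
    center: Vec          # position (translation)
    u: Vec               # unit vector along the width (orientation)
    v: Vec               # unit vector along the height (orientation)
    half_width: float    # size (scale)
    half_height: float

    def blocks(self, start: Vec, end: Vec) -> bool:
        """True if the straight move from start to end crosses the rectangle."""
        normal = cross(self.u, self.v)
        d0 = dot(sub(start, self.center), normal)
        d1 = dot(sub(end, self.center), normal)
        if d0 * d1 > 0 or d0 == d1:
            return False  # both points on one side, or moving within the plane
        t = d0 / (d0 - d1)  # where along the move the plane is reached
        hit = tuple(s + t * (e - s) for s, e in zip(start, end))
        rel = sub(hit, self.center)
        return (abs(dot(rel, self.u)) <= self.half_width
                and abs(dot(rel, self.v)) <= self.half_height)

# A 2 m x 2 m barrier in the plane x = 2, covering an imagined window.
window = Barrier(center=(2.0, 1.0, 0.0), u=(0.0, 1.0, 0.0), v=(0.0, 0.0, 1.0),
                 half_width=1.0, half_height=1.0)

through_window = window.blocks((1.5, 1.0, 0.0), (2.5, 1.0, 0.0))
beside_window = window.blocks((1.5, 5.0, 0.0), (2.5, 5.0, 0.0))
inside_room = window.blocks((1.0, 1.0, 0.0), (1.5, 1.0, 0.0))
print(through_window, beside_window, inside_room)
```

Only the move that passes through the rectangle is rejected; moves that cross the same plane outside the rectangle, or that stay on one side, are allowed.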

To enhance the sense of physical presence within the scene, I implemented a collision detection system that prevents the camera from passing through walls, floors, and other solid surfaces. During the PLY-to-RAD conversion stage, the tool automatically voxelises the splat data into a compact 3D occupancy grid: a lightweight binary file that encodes which regions of the scene contain dense geometry. At runtime, the viewer loads this grid and checks the camera's position against it before every movement, treating the camera as a small sphere rather than a single point so that it keeps a natural distance from all surfaces. When a direct path is blocked, the camera glides along surfaces instead of stopping abruptly. Because the collision boundaries are derived from the reconstructed splat geometry, they normally require no manual modelling or scene annotation. Where needed, optional invisible “barrier” walls can be added in the converter application.
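The idea can be sketched in a few lines of Python. This is a simplified illustration and not the converter's or viewer's actual code: the grid is held as a Python set rather than a binary file, the function names and the `min_count` density threshold are hypothetical, and "gliding" is approximated by retrying the move one axis at a time instead of projecting it onto the surface.

```python
import math

def voxelise(points, voxel_size, min_count=1):
    """Return the set of voxel indices holding at least min_count points."""
    counts = {}
    for x, y, z in points:
        key = (math.floor(x / voxel_size),
               math.floor(y / voxel_size),
               math.floor(z / voxel_size))
        counts[key] = counts.get(key, 0) + 1
    return {key for key, n in counts.items() if n >= min_count}

def sphere_hits(occupied, centre, radius, voxel_size):
    """True if a sphere overlaps any occupied voxel (sphere vs box test)."""
    lo = [math.floor((c - radius) / voxel_size) for c in centre]
    hi = [math.floor((c + radius) / voxel_size) for c in centre]
    for i in range(lo[0], hi[0] + 1):
        for j in range(lo[1], hi[1] + 1):
            for k in range(lo[2], hi[2] + 1):
                if (i, j, k) not in occupied:
                    continue
                # Squared distance from the sphere centre to this voxel's box.
                d2 = 0.0
                for c, idx in zip(centre, (i, j, k)):
                    box_min = idx * voxel_size
                    nearest = min(max(c, box_min), box_min + voxel_size)
                    d2 += (c - nearest) ** 2
                if d2 < radius * radius:
                    return True
    return False

def move_with_sliding(occupied, pos, delta, radius, voxel_size):
    """Try the full move; if blocked, apply each axis component separately."""
    target = tuple(p + d for p, d in zip(pos, delta))
    if not sphere_hits(occupied, target, radius, voxel_size):
        return target
    current = list(pos)
    for axis in range(3):
        trial = list(current)
        trial[axis] += delta[axis]
        if not sphere_hits(occupied, trial, radius, voxel_size):
            current = trial
    return tuple(current)

# Stand-in for splat centres: a flat wall of points at x = 2.05.
VOXEL = 0.1
wall = [(2.05, y * 0.1 + 0.05, z * 0.1 + 0.05)
        for y in range(20) for z in range(-20, 20)]
occupied = voxelise(wall, VOXEL)

# The camera (radius 0.2) heads diagonally into the wall.
result = move_with_sliding(occupied, (1.5, 1.0, 0.0), (0.5, 0.0, 0.3), 0.2, VOXEL)
print(result)  # (1.5, 1.0, 0.3)
```

In the example, the component of the move that points into the wall is discarded while the component along the wall is kept, so the camera slides sideways instead of stopping. A production viewer would more likely use a dense bit array and a normal-based slide, but the per-move check has the same shape.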

Key innovations and achievements

Separate front- and rear-lens frame extraction from 360 video (bypassing the artificial seam created when the two lenses are merged into a full 360 video)

Custom prompt-based masking pipeline powered by SAM 3 (from Meta), an AI segmentation model, for removing unwanted elements

Successful alignment of approximately 1,300 fisheye images in Agisoft Metashape

Splat-density optimisation during Brush training for improved reconstruction quality

SuperSplat-based refinement and compression for deployment efficiency

Custom PLY-to-RAD conversion workflow for web delivery, based on Spark 2.0 (an open-source Gaussian splat renderer from World Labs / SparkJS)

Automatic voxel-based collision detection derived from splat geometry, with sphere-volume camera and surface-gliding navigation

Optional barrier walls for constraining navigation prior to web deployment

This project proved to be a highly valuable learning experience, deepening my understanding of both photogrammetry and Gaussian splatting across the entire workflow. By using a multi-pass capture method — walking through the space while recording from high, eye-level, and ground-level viewpoints — I was able to improve scene coverage and gain practical insight into how capture strategy affects reconstruction quality. The later stages of extracting frames, masking the camera operator, aligning cameras in Agisoft Metashape, training the scene in Brush, refining and compressing it in SuperSplat, and preparing it for web deployment further strengthened my understanding of each stage of the pipeline. Just as importantly, the technical challenges encountered during the project led me to develop several custom tools, which I intend to refine and expand in the future.

I hope this evolving workflow will support the capture of many more spaces, especially in future heritage-focused projects and collaborations.

The Elephant (bird) in the room…

The elephant bird is an extinct flightless bird from Madagascar and is considered one of the largest birds known to science. It is especially remarkable for laying the largest shelled eggs ever recorded. Despite its enormous size, its closest living relative is the kiwi, making it a striking example of evolutionary divergence among birds.